The threat from AI

- Thread starter Umai

- Start date

- MBTI

- ENFP

- Enneagram

- 947 sx/sp

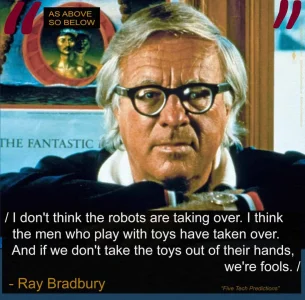

A disembodied, artificial intelligence, with none of the biological or psychological drives of a biological organism. Where would its motivation to act come from?

Inasmuch as AI does not have will or need, it has no motivation whatsoever. It only does as it is instructed/programmed to do.

In this way, it is like any other tool. It is a thing which only attains utility when acted upon by a human being.

Even the fuzziest machine learning is still a collection of bits, nybbles, bytes, and n-length words, all based in binary and logic/math/bit-op functions.

Cheers,

Ian

Jexocuha

Cave Ghost

- MBTI

- 0x494E

- Enneagram

- 0b100

The truth is that "A.I." has been around, and been developing, for a long time. Also known as "the Last True Hacker", Richard Stallman "worked at the MIT Artificial Intelligence Lab from June 1971 through December 1983. There he developed the AI technique of dependency-directed backtracking, also known as truth maintenance." (from his Internet Hall of Fame entry)

The paper "Forward Reasoning and Dependency-Directed Backtracking in a System for Computer-Aided Circuit Analysis" was authored by Richard M. Stallman and Gerald Jay Sussman in 1976. The full text can be downloaded here: https://dspace.mit.edu/handle/1721.1/6255

A truth maintenance system, or TMS, combines a knowledge representation method that records both beliefs and their dependencies with an algorithm, called the "truth maintenance algorithm", that manipulates and maintains those dependencies.
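To make the idea of "beliefs and their dependencies" a bit more concrete, here is a minimal sketch in Python. It is a toy, not the algorithm from the Stallman and Sussman paper: the class name `TinyTMS`, its methods, and the circuit-flavoured belief names are all made up for illustration, and it simply recomputes everything from scratch instead of updating dependencies incrementally.

```python
from dataclasses import dataclass, field


@dataclass
class TinyTMS:
    """Toy truth maintenance: beliefs plus the dependencies that support them.

    Hypothetical illustration only; not the algorithm from the 1976 paper.
    """
    assumptions: set = field(default_factory=set)       # beliefs held without support
    justifications: list = field(default_factory=list)  # (antecedents, consequent) pairs

    def assume(self, belief):
        self.assumptions.add(belief)

    def retract(self, belief):
        self.assumptions.discard(belief)

    def justify(self, consequent, antecedents):
        """Record that `consequent` holds whenever all `antecedents` hold."""
        self.justifications.append((frozenset(antecedents), consequent))

    def support(self):
        """Map each currently held belief to the assumptions it rests on.

        Naive fixed-point recomputation: keep applying justifications
        until no new belief can be derived.
        """
        support = {a: {a} for a in self.assumptions}
        changed = True
        while changed:
            changed = False
            for antecedents, consequent in self.justifications:
                if consequent not in support and all(a in support for a in antecedents):
                    support[consequent] = set().union(*(support[a] for a in antecedents))
                    changed = True
        return support


tms = TinyTMS()
tms.assume("diode_on")
tms.assume("source_connected")
tms.justify("current_flows", ["diode_on", "source_connected"])
tms.justify("lamp_lit", ["current_flows"])
print(sorted(tms.support()["lamp_lit"]))  # ['diode_on', 'source_connected']

# Retracting an assumption withdraws every belief that depended on it.
tms.retract("diode_on")
print("lamp_lit" in tms.support())  # False
```

Because every derived belief carries the set of assumptions it rests on, a contradiction can be traced back to the specific assumptions responsible. As I understand it, that is what lets dependency-directed backtracking drop only the offending assumption instead of blindly undoing the most recent choice.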

Cait Takara

Newbie

- MBTI

- INFJ-T

- Enneagram

- 8w7

I'm a tech nerd, but AI and robots are just unsettling. I watched a news video a while back of this robot essentially doing gymnastics, running, etc. I thought it was cool until the thought "They don't lose stamina like a human though..." crept in.

Fast forward to probably two weeks ago: a Google ad on YouTube highlighting AI... this avatar, or whatever it was, looked so realistic I took a screenshot. I can't even make sense of why that creeped me out, considering how realistic characters look in video games today. Having said that, I'm envisioning some wild shit going down in the future. Anyone remember The Terminator?.. Yup.

Matty

Permanent Fixture

- MBTI

- INTJ

Part of the danger of AI (general) is that it may have no self-motivation, just optimisation. The classic hypothetical is an AI optimised to make paperclips. Left alone, it might reduce the entire Earth to paperclips.

On the other hand, if truly conscious AI emerges, it could be threatening to humans whether it is benign, indifferent, or malicious. The corresponding scenarios are the movie "I, Robot", the "landscaper indifferently crushing ant hills", and the movie "The Terminator", respectively.

The common danger is some variation of loss of control, including ending up controlled under an impossibly great IQ disadvantage.

Jexocuha

Cave Ghost

- MBTI

- 0x494E

- Enneagram

- 0b100

Baldwin, Roberto. “Human versus Autonomous Car Race Ends before It Begins.” Ars Technica, 22 Dec. 2024, arstechnica.com/cars/2024/12/man-vs-ai-race-scrapped-after-ai-car-crashes-into-wall-on-warm-up-lap/.

As we've noted before, how the vehicles navigate the tracks and world around them isn't actually AI. It's programmed responses to an environment; these vehicles are not learning on their own. Frankly, most of what is called "AI" in the real world is also not AI.

Quarkmaster

Community Member

- MBTI

- INTP

"They don't lose stamina like a human though..." crept in.

Their batteries run down. And much more quickly than do ours.

Also, it's Christmas Day and some of us have nothing better to do.

Merry Christmas

Even though I'm not really into it or even a believer.

Jexocuha

Cave Ghost

- MBTI

- 0x494E

- Enneagram

- 0b100

This is what happened when a group of researchers fed a custom set of clock and calendar images to some of the top MLLMs (multimodal large language models) from Meta, Google, OpenAI, and others that are meant to process visual and textual information. The models had a difficult time looking at a clock and accurately telling the time, or reading the date off a calendar.

Turney, D. (2025, May 17). AI models can’t tell time or read a calendar, study reveals. Live Science. https://www.livescience.com/technol...nt-tell-time-or-read-a-calendar-study-reveals

This shortcoming is similarly surprising because arithmetic is a fundamental cornerstone of computing, but as Saxena explained, AI uses something different. "Arithmetic is trivial for traditional computers but not for large language models. AI doesn't run math algorithms, it predicts the outputs based on patterns it sees in training data," he said. "So while it may answer arithmetic questions correctly some of the time, its reasoning isn't consistent or rule-based, and our work highlights that gap."

Turney, D. (2025, May 17). AI models can’t tell time or read a calendar, study reveals. Live Science. https://www.livescience.com/technol...nt-tell-time-or-read-a-calendar-study-reveals
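For contrast, this is roughly what "rule-based" means in the quote above. It is only a sketch under my own assumptions: the function name `time_from_hands` is made up, and it takes the hand angles as given (degrees clockwise from 12), which skips the hard part the study is actually about, namely getting those angles out of an image.

```python
def time_from_hands(hour_angle_deg: float, minute_angle_deg: float) -> str:
    """Read an analog clock from its hand angles (degrees clockwise from 12).

    Hypothetical helper for illustration; the angles are assumed to be
    already measured, which is the step a vision model would have to do.
    """
    # The minute hand sweeps 360 degrees in 60 minutes: 6 degrees per minute.
    minute = round(minute_angle_deg / 6) % 60
    # The hour hand moves 30 degrees per hour plus 0.5 degrees per minute,
    # so subtract the minute contribution before dividing.
    hour = round((hour_angle_deg - minute * 0.5) / 30) % 12
    return f"{hour or 12}:{minute:02d}"


print(time_from_hands(105, 180))  # 3:30
print(time_from_hands(0, 0))      # 12:00
```

The same inputs always give the same answer, because the rule is applied rather than predicted. That consistency is the gap the quote is pointing at.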

Ginny

Shrrg

- MBTI

- INFJ IEI

- Enneagram

- 1w2 sx/sp

Since AI has matured and is slowly reaching a point wherein it actually can pose a threat to humanity if not secured, I'd like to revive this discussion by sharing a list of scenarios that scholars have come up with. I found this one rather interesting. Most of the scenarios feel less disturbing than anything humans are capable of.

Quarkmaster

Community Member

- MBTI

- INTP

"When idiots give AI free rein with OpenClaw (I would like to see their bill on the tokens used)"

Interesting video. This appears to be a new kind of AI (the "Agent"), which, if real, could be dangerous in a whole new way.

On the other hand, anyone who would give an agent that much control and capability should bear the responsibility for any damage it might do. Every bit (or byte) of software is traceable to a person.

Ginny

Shrrg

- MBTI

- INFJ IEI

- Enneagram

- 1w2 sx/sp

Disclaimer: lots of half-knowledge in this, so do correct me if I'm wrong.

AI agents have actually been around for quite a while already, or so I thought. According to Google, they have only recently been made publicly available. They are, from my understanding, software that can make use of AI models to do tasks. The user has to actively open channels in that software for the AI to perform the tasks unsupervised, which is already a mistake (AI should always be supervised in some way). Lazy people would do the most stupid thing and give the AI too much access.

If memory serves, AI-enhanced processes have been mappable through platforms like n8n. OpenClaw is removing the human element in setting up such automations and gives an AI agency to do anything it can to do what it's been tasked with. Leaning far out the window, I suppose this works by widening the context accessible to the AI, but it's still working through use of the same models we know. There is an even newer emerging piece of tech surfacing that's called Hermes Agent, that could supposedly topple OpenClaw from its temporary throne.

OpenAI, Anthropic et al. are working to further enhance each model's capabilities, which can be gleaned through the recently leaked source code of Claude Mythos. It contained as yet inactive features they're probably still working on.

The name Mythos has people wondering if they are starting to see Shoggoth emerge.

- MBTI

- ENFP

- Enneagram

- 947 sx/sp

"Since AI has matured and is slowly reaching a point wherein it actually can pose a threat to humanity if not secured, I'd like to revive this discussion by sharing a list of scenarios that scholars have come up with. I found this one rather interesting. Most of the scenarios feel less disturbing than anything humans are capable of."

Humans are mammals with duality consciousness, a consciousness which suggests an individuated self, apart from other. We believe in the scarcity paradigm, our motives are quite basic under stressors, and our median IQ is 100, and slowly dropping.

Of course this ends badly for most. Of course everyone dies in due time.

I do not worry much about that which I do not control, in that I value equanimity.

Many of these things could happen, and I wonder, who cares? What does it matter?

Human beings were and are perfectly cruel to each other outside of AI. What AI will bring is that cruelty, made efficient.

So nothing to worry about. We know how the story ends, in many possible ways.

We are not special, but we are fucking stupid. As a collective species. Grand, relative to ourselves. In a larger context, comical, and perhaps pathetic. Whatever.

Cheers,

Ian